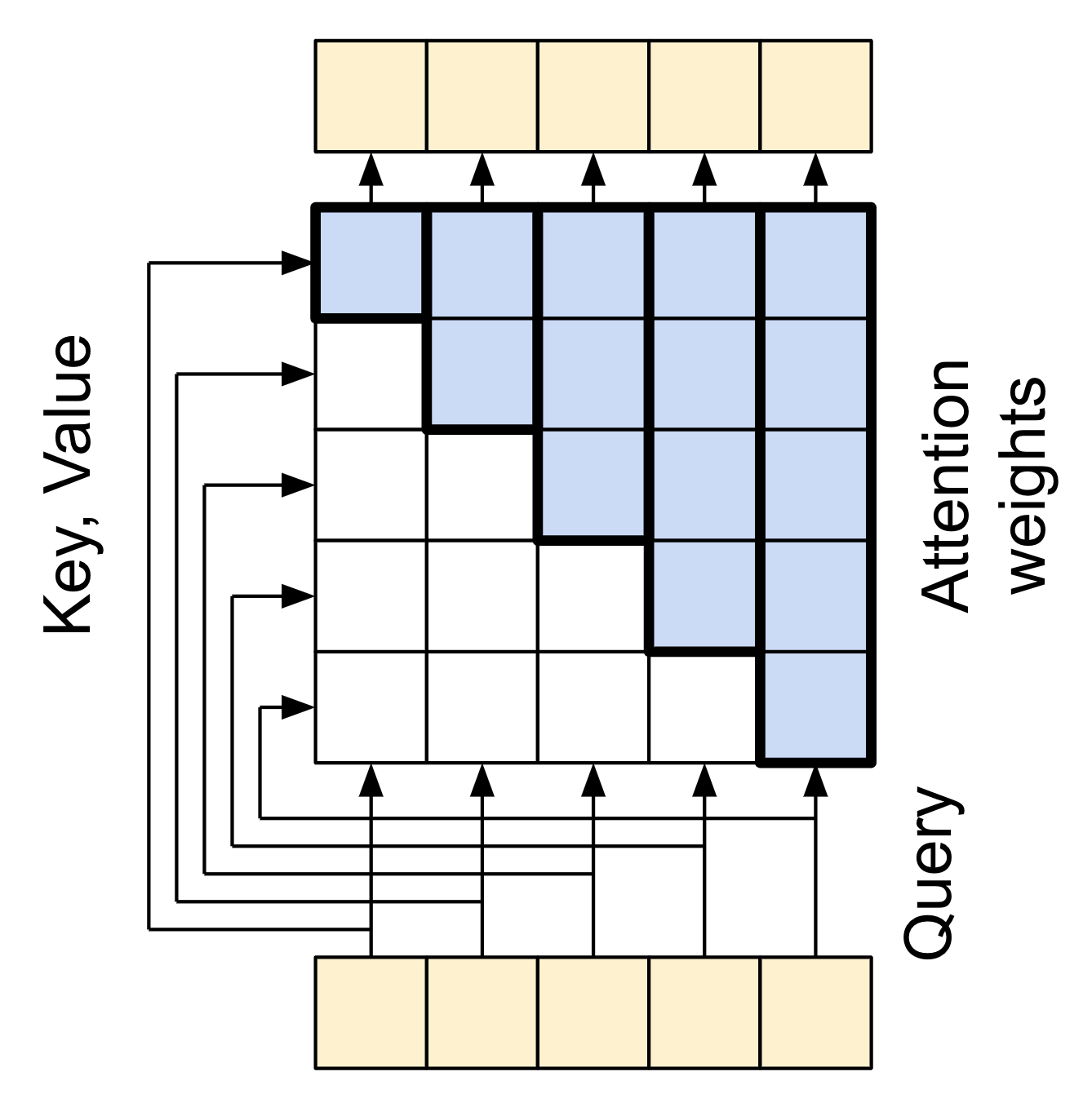

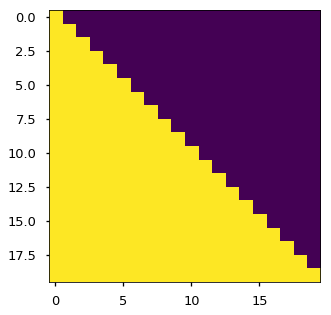

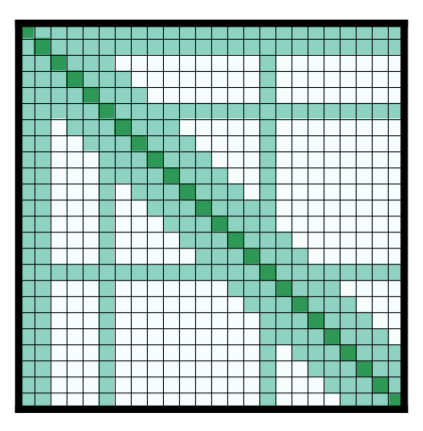

Four types of self-attention masks and the quadrant for the difference... | Download Scientific Diagram

Attention Wear Mask, Your Safety and The Safety of Others Please Wear A Mask Before Entering, Sign Plastic, Mask Required Sign, No Mask, No Entry, Blue, 10" x 7": Amazon.com: Industrial &

The Illustrated GPT-2 (Visualizing Transformer Language Models) – Jay Alammar – Visualizing machine learning one concept at a time.

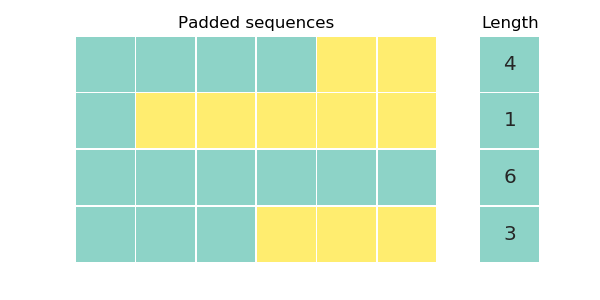

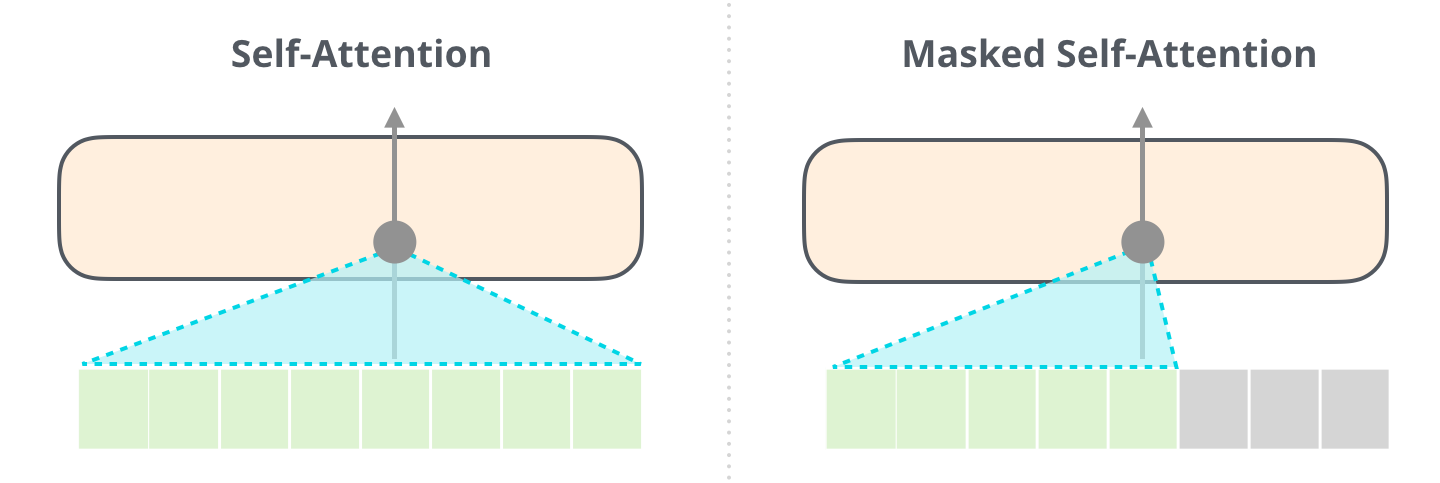

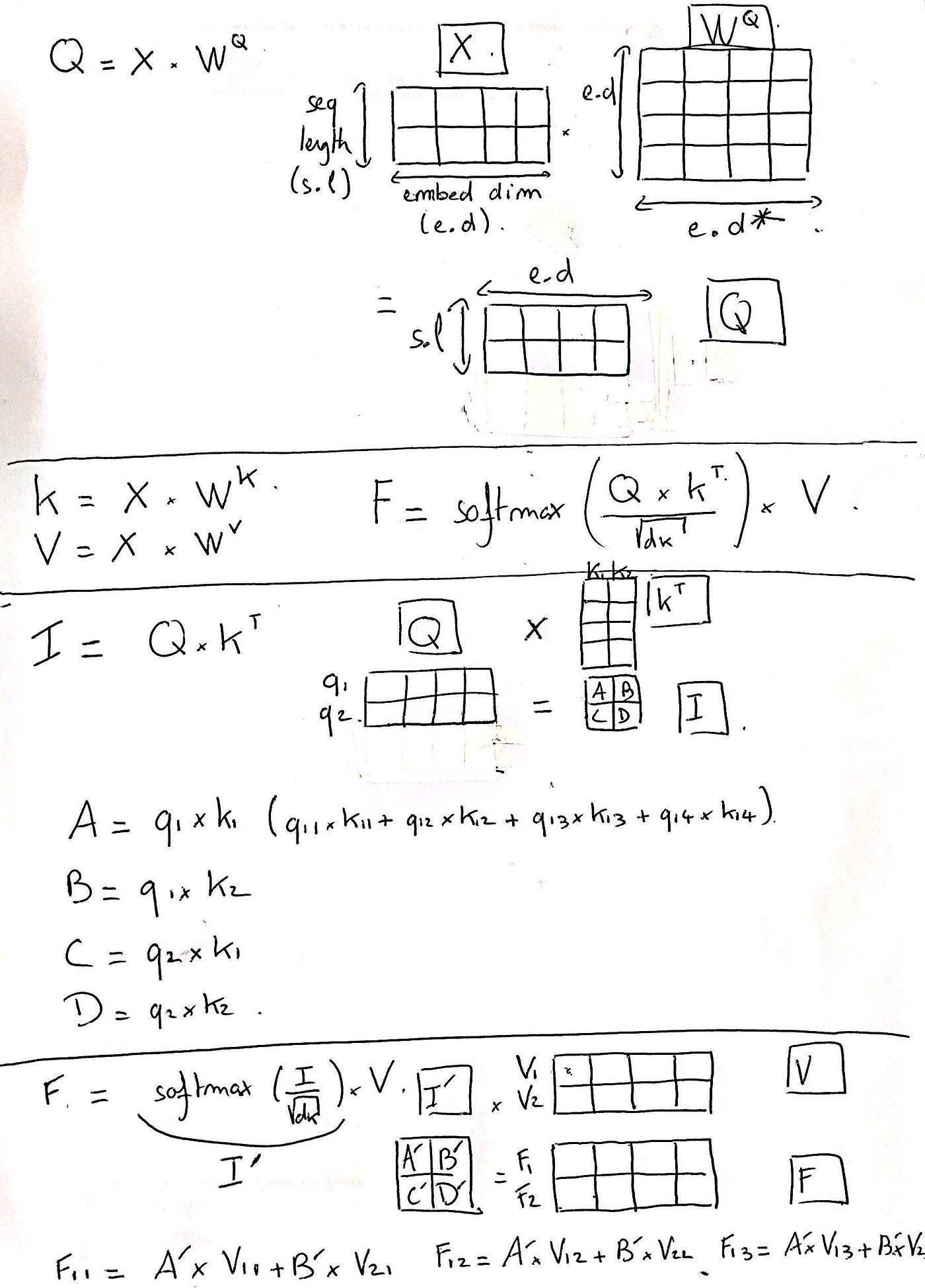

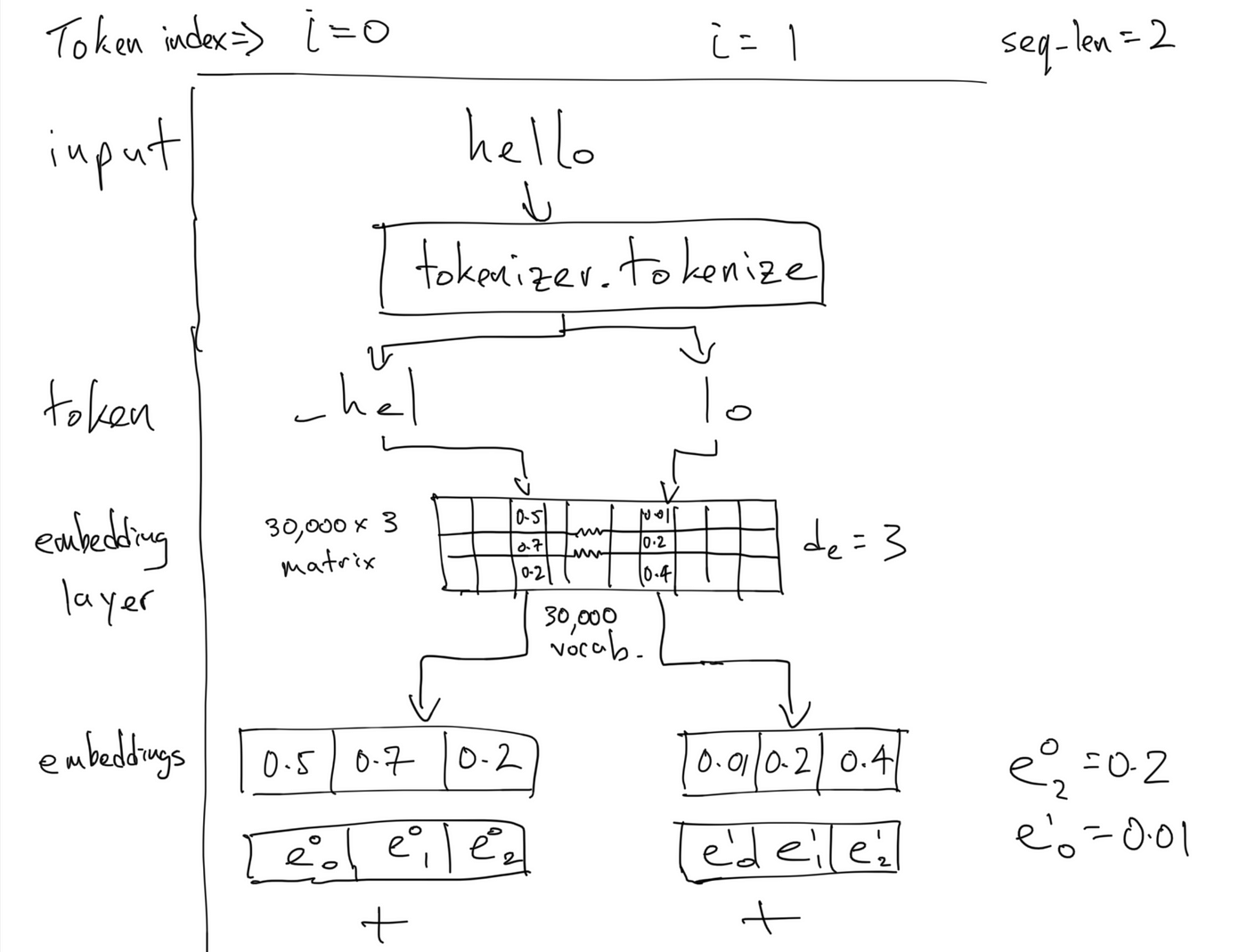

Masking in Transformers' self-attention mechanism | by Samuel Kierszbaum, PhD | Analytics Vidhya | Medium

Illustration of the three types of attention masks for a hypothetical... | Download Scientific Diagram

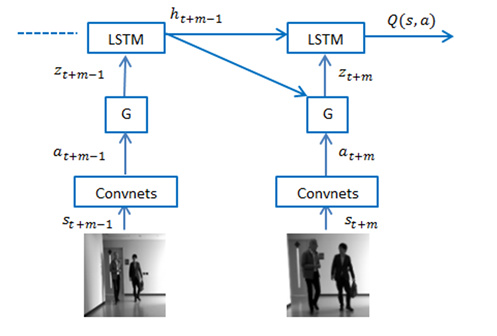

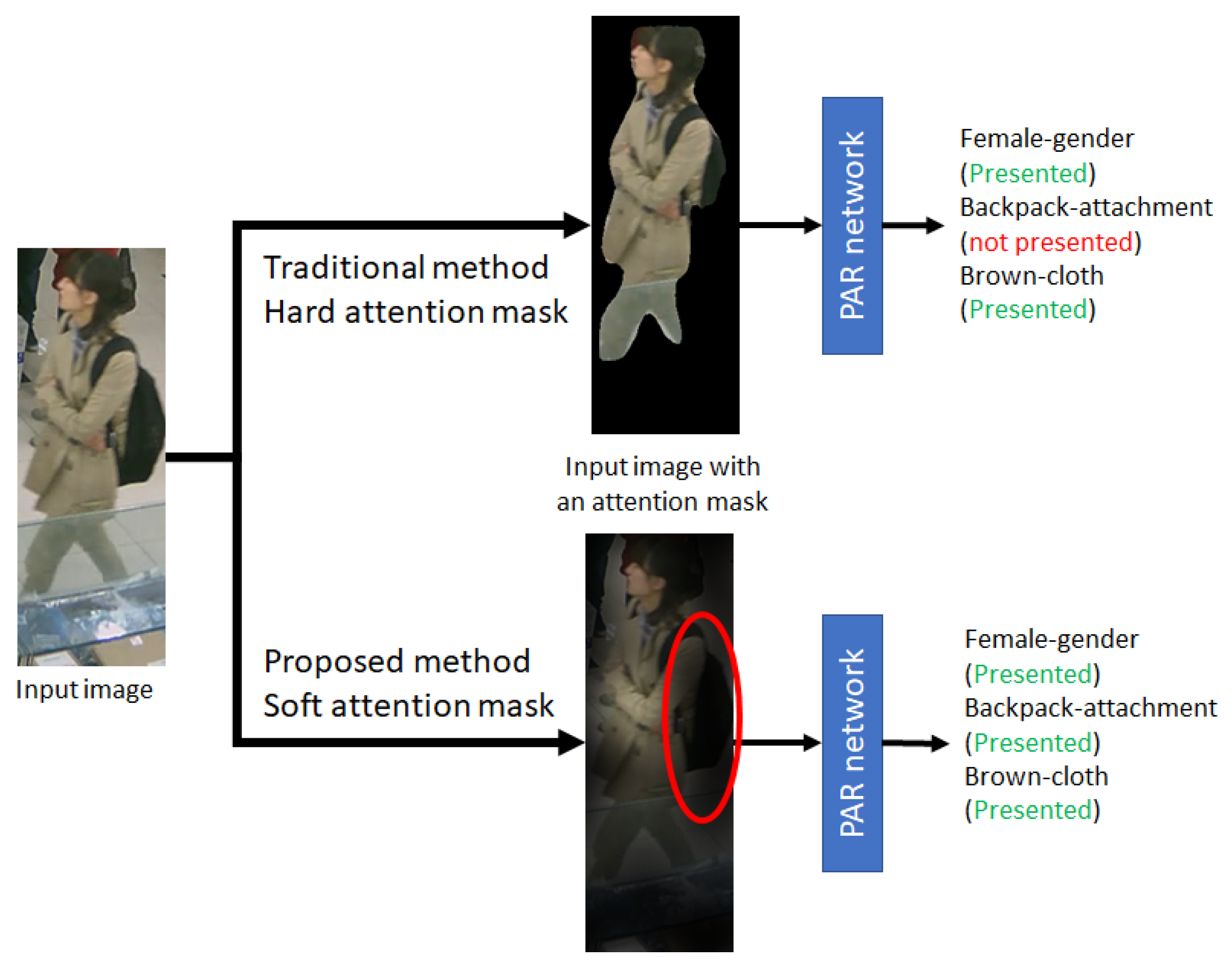

J. Imaging | Free Full-Text | Skeleton-Based Attention Mask for Pedestrian Attribute Recognition Network

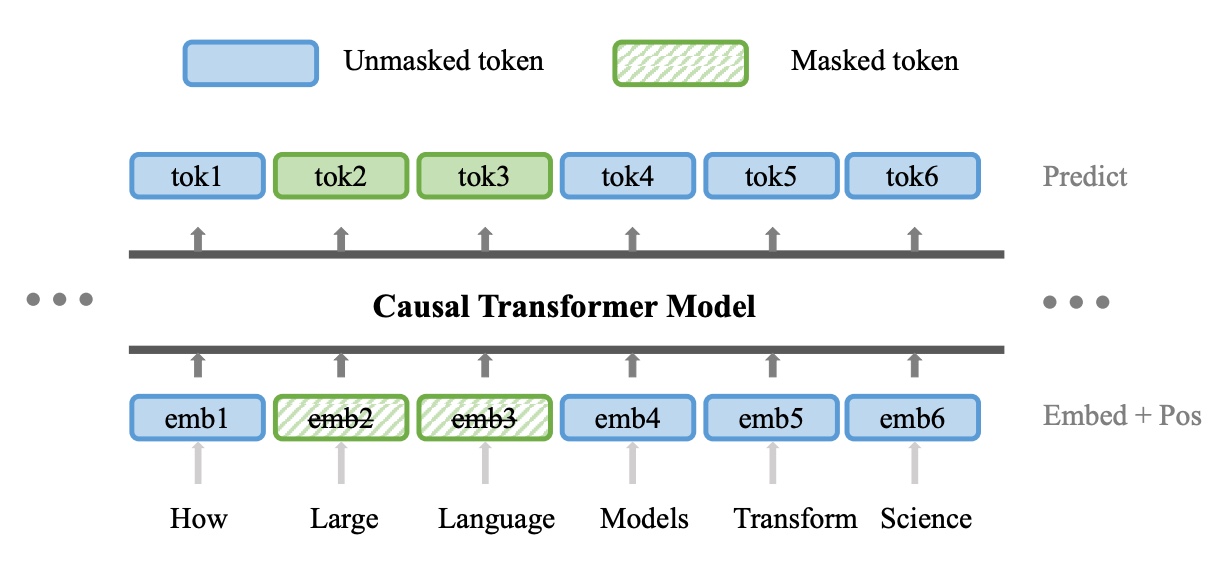

Hao Liu on Twitter: "Our method, Forgetful Causal Masking(FCM), combines masked language modeling (MLM) and causal language modeling (CLM) by masking out randomly selected past tokens layer-wisely using attention mask. https://t.co/D4SzNRzW06"

How to implement seq2seq attention mask conviniently? · Issue #9366 · huggingface/transformers · GitHub

![[D] Causal attention masking in GPT-like models : r/MachineLearning](https://preview.redd.it/d-causal-attention-masking-in-gpt-like-models-v0-ygipbem3cqv91.png?width=817&format=png&auto=webp&s=67002a5b7c32166020a325feaa4a8abaa86dc7cc)

![[PDF] Intentional Attention Mask Transformation for Robust CNN Classification | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/99ae91d9532216b585e7d273b8c7fac2d18f7bcd/3-Figure2-1.png)